AI + iPhone = software development on the go

Exploring the possibilities of using an iPhone as a legitimate development machine with the help of AI tools like GitHub Copilot, and how this combination might shape the future of mobile development.

I've always wondered if I could turn my iPhone into a legitimate development machine.

Not to build the next unicorn app from scratch, but to see if I could act on inspiration anywhere and ship actual code without touching a laptop. This week, I put that to the test.

The Setup

It started with a MagSafe tripod I bought from TikTok Shop. (I'm a sucker for stuff like that. Utility!) It made for a great viewing experience when watching videos, but naturally I wanted to push it further, so I added a wireless keyboard to see if I could create a full-fledged mobile computing experience.

I started simple: taking notes while watching YouTube lectures. The physical keyboard made a huge difference in my note-taking speed and accuracy. Adding a wireless mouse was a game-changer for text editing and navigation. With both peripherals connected, my iPhone felt like a proper workstation. The familiar click of keys and precise cursor control made me forget I was working on a phone.

To enable mouse support on your iPhone:

- Open Settings

- Navigate to Accessibility > Touch > AssistiveTouch

- Turn on AssistiveTouch

- Under Pointer Devices, tap Devices > Bluetooth Devices and pair your mouse

Note: Mouse support works best in portrait mode. Landscape mode support is currently limited.

With the hardware sorted, it was time to see if this setup could handle actual dev work—not just note-taking. That meant picking a real, shippable change.

The Real Test: Shipping Code

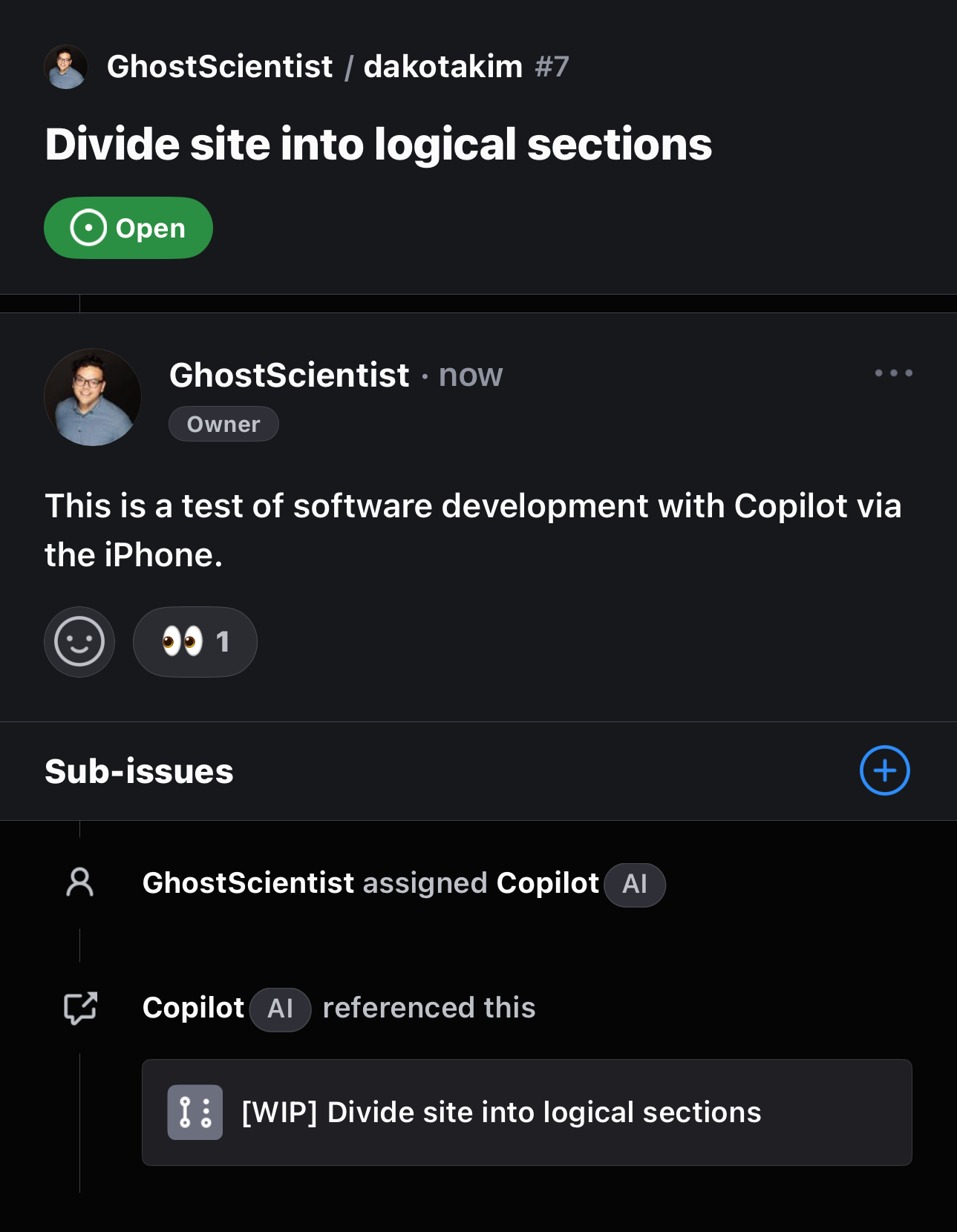

Being a programmer, I naturally opened the GitHub app and decided to update my website. I chose a simple task: logically grouping the buttons on my site by topic. There are many ways to do this: using Codespaces, editing the source file directly on GitHub, or, most recently, getting help from GitHub Copilot.
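To make "group the buttons by topic" concrete, here is a hypothetical Python sketch of the structural change: each button is tagged with a topic, the buttons are bucketed by topic, and each bucket is rendered as its own section. The site's real markup and the code Copilot produced aren't shown here, so the `BUTTONS` data, the section headings, and the `button-group`/`button` class names are all illustrative assumptions.

```python
from collections import defaultdict
from html import escape

# Hypothetical button data; labels, URLs, and topics are placeholders.
BUTTONS = [
    {"label": "Portfolio", "url": "/projects", "topic": "projects"},
    {"label": "Latest post", "url": "/blog/latest", "topic": "blog"},
    {"label": "GitHub", "url": "https://github.com/your-username", "topic": "social"},
    {"label": "Archive", "url": "/blog", "topic": "blog"},
]

# Assumed section order and headings for the three groups.
SECTIONS = [
    ("projects", "Project showcase"),
    ("blog", "Blog posts"),
    ("social", "Social links"),
]


def group_by_topic(buttons):
    """Map topic -> list of buttons, keeping the input order within each topic."""
    groups = defaultdict(list)
    for button in buttons:
        groups[button["topic"]].append(button)
    return groups


def render_sections(buttons):
    """Render one <section> per non-empty topic, reusing one (hypothetical) button class."""
    groups = group_by_topic(buttons)
    lines = []
    for topic, heading in SECTIONS:
        items = groups.get(topic, [])
        if not items:
            continue  # skip empty groups instead of rendering a bare heading
        lines.append(f'<section class="button-group" id="{topic}">')
        lines.append(f"  <h2>{escape(heading)}</h2>")
        for b in items:
            lines.append(f'  <a class="button" href="{escape(b["url"])}">{escape(b["label"])}</a>')
        lines.append("</section>")
    return "\n".join(lines)


if __name__ == "__main__":
    print(render_sections(BUTTONS))
```

Keeping a single shared button class while only adding section wrappers is one way to change the hierarchy without touching the existing styling.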

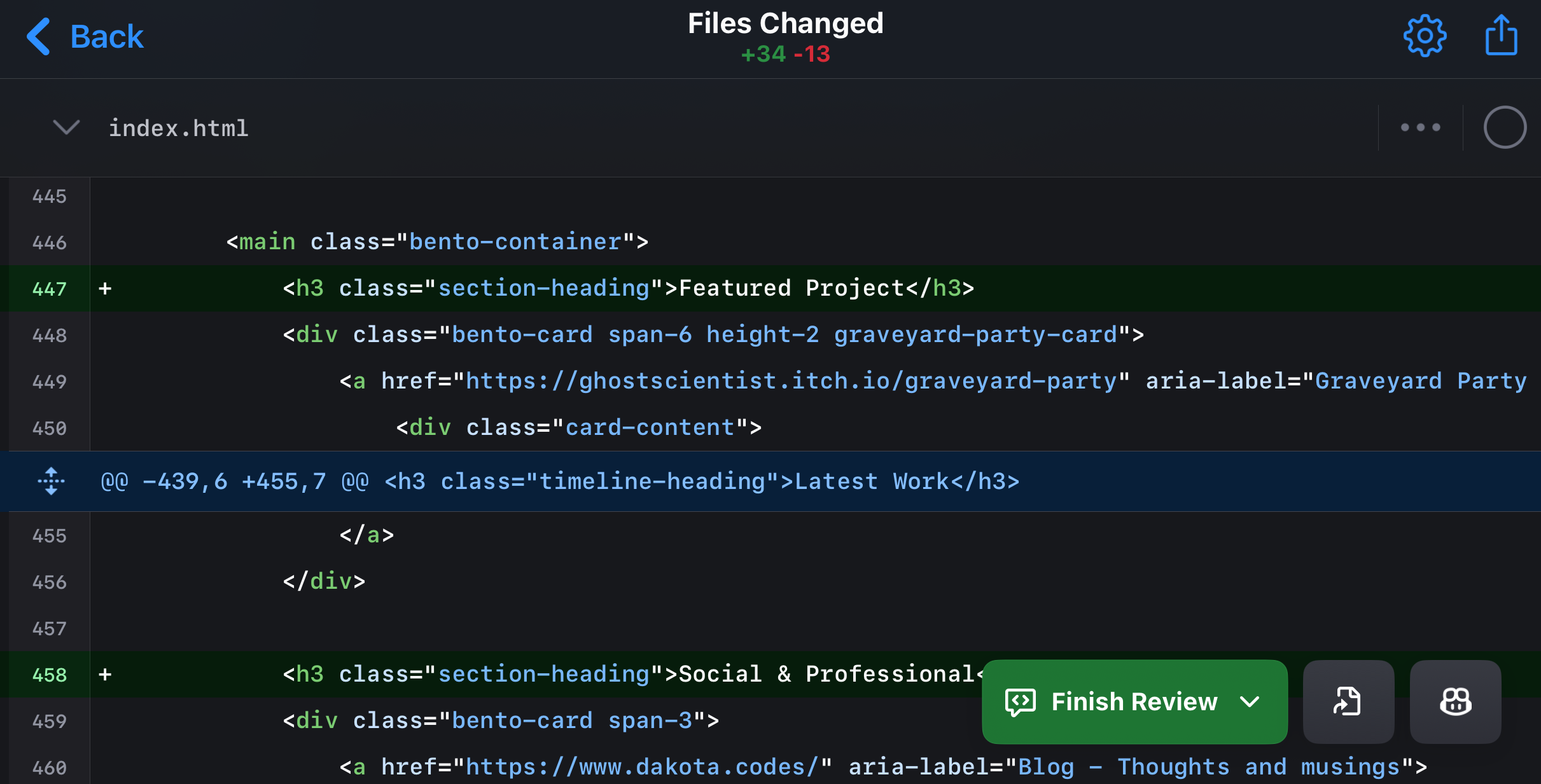

The keyboard and mouse helped, but let's be real: coding on a phone screen isn't ideal. This limitation led to an insight: mobile development works better as orchestration than direct coding. Instead of fighting the small screen, I leaned into automation. GitHub Copilot would handle the heavy lifting while I focused on direction and review.

The Agentic Workflow

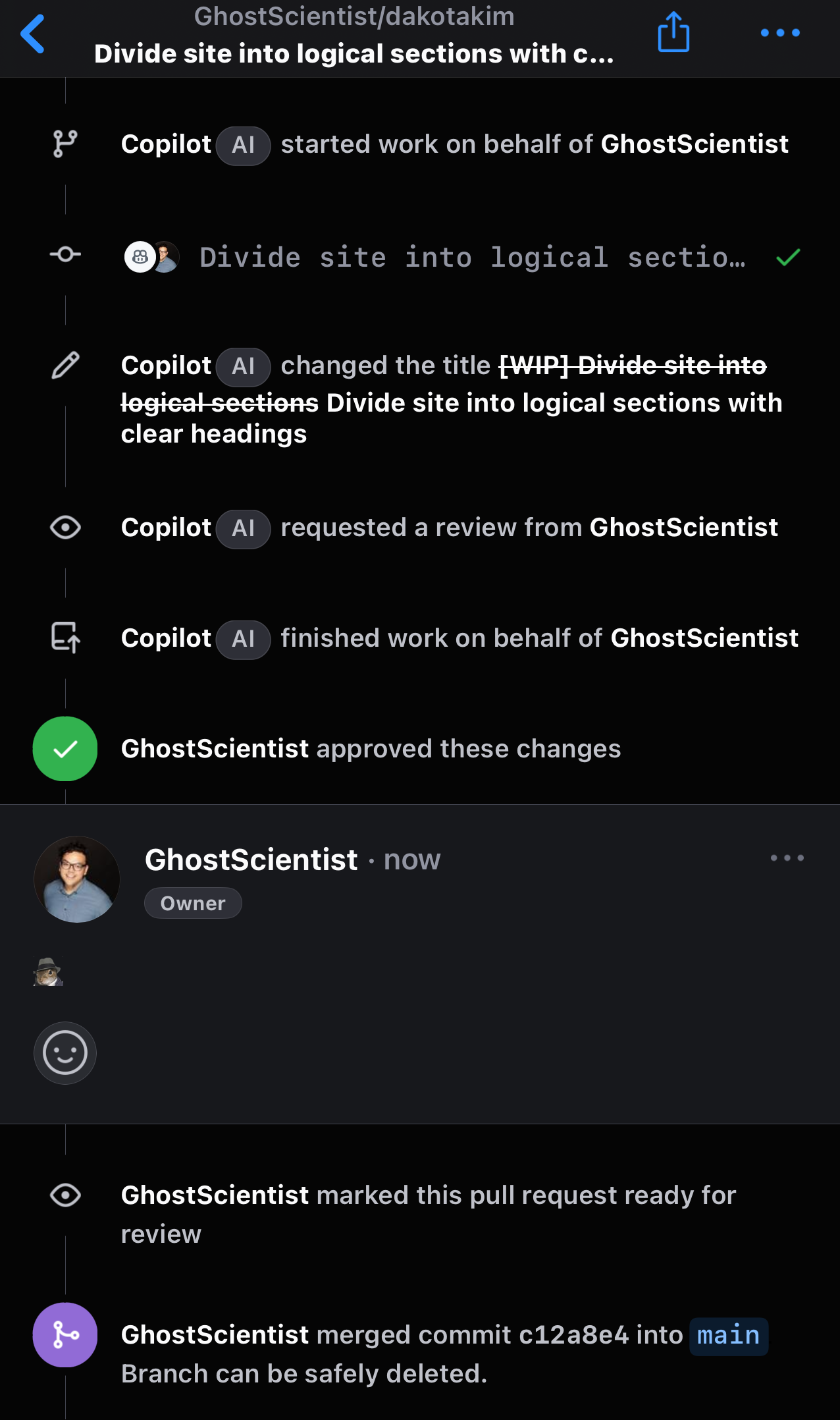

The "agentic" part came from treating Copilot less like autocomplete and more like a teammate. I created an issue, handed it the requirements, and let it plan, implement, and open a PR while I stayed in the role of reviewer and product owner.

Working on mobile pushed me to write better requirements. Instead of "group the buttons," I specified: "Create logical sections for project showcase, blog posts, and social links, maintaining the current styling but improving navigation hierarchy." When Copilot's first attempt kept too much inline styling, I refined the prompt to emphasize my site's CSS class conventions. The small screen became an advantage: it forced me to think through changes completely before executing them.
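One way to keep requirements like these precise is to give the issue a fixed shape: a goal plus explicit constraints. The sketch below only builds the JSON body for GitHub's REST "create an issue" endpoint (`POST /repos/{owner}/{repo}/issues`), which accepts `title` and `body` fields; it does not send the request. The Markdown layout of the body and the `build_issue_payload` helper are hypothetical choices, not a format Copilot requires.

```python
import json


def build_issue_payload(title, goal, constraints):
    """Build a JSON-serializable body for POST /repos/{owner}/{repo}/issues.

    The "## Goal" / "## Constraints" layout is an assumed convention.
    """
    lines = ["## Goal", goal, "", "## Constraints"]
    lines += [f"- {c}" for c in constraints]
    return {"title": title, "body": "\n".join(lines)}


payload = build_issue_payload(
    title="Group site buttons by topic",
    goal="Create logical sections for project showcase, blog posts, and social links.",
    constraints=[
        "Maintain the current styling.",
        "Improve the navigation hierarchy.",
        "Use existing CSS classes instead of inline styles.",
    ],
)
print(json.dumps(payload, indent=2))
```

Listing the CSS-class constraint up front is the kind of detail that would otherwise only surface after a first attempt misses it.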

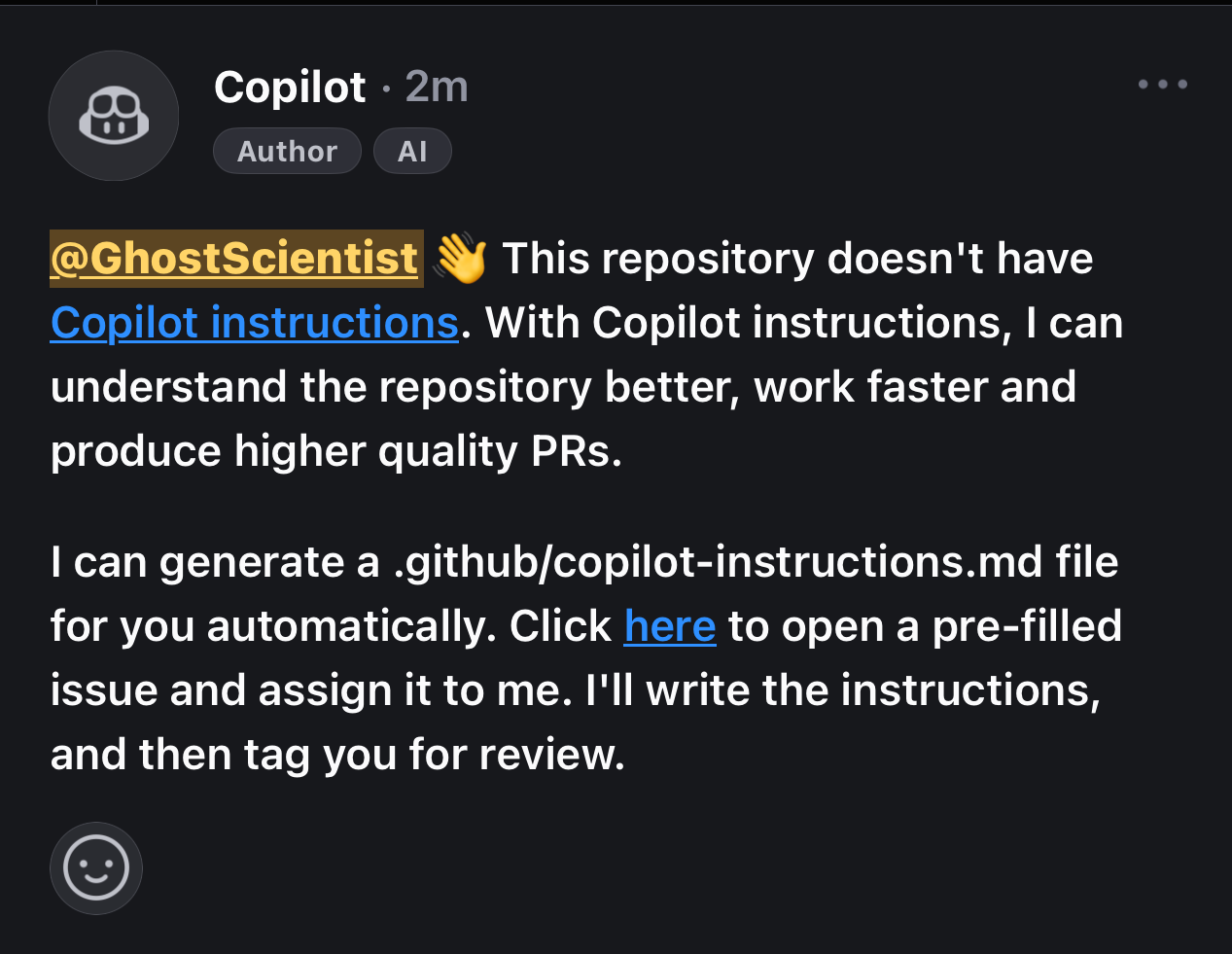

After creating my grouping issue, I was prompted to have Copilot generate a .github/copilot-instructions.md file, essentially teaching it my project's conventions and preferences. This investment pays dividends in future work sessions, because Copilot reads that file as context for later requests in the repository instead of starting from scratch each time.

Why This Actually Works

Automated pipelines were crucial to making this work. My site is a simple static build, so Netlify's Deploy Previews built each pull request and let me see the actual changes live. I could review both the code and the running site right from my phone.
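Netlify's default Deploy Preview address follows a predictable pattern, `deploy-preview-<PR number>--<site name>.netlify.app`, which makes the preview easy to open from a phone once you know the PR number. The helper below is a small sketch of that pattern; the site name `my-site` is a placeholder, and a site configured with custom preview domains may use a different address.

```python
def deploy_preview_url(site_name: str, pr_number: int) -> str:
    """Return the default Netlify Deploy Preview URL for a pull request.

    Assumes Netlify's default netlify.app subdomain pattern.
    """
    if pr_number <= 0:
        raise ValueError("pull request numbers start at 1")
    return f"https://deploy-preview-{pr_number}--{site_name}.netlify.app"


print(deploy_preview_url("my-site", 42))
# → https://deploy-preview-42--my-site.netlify.app
```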

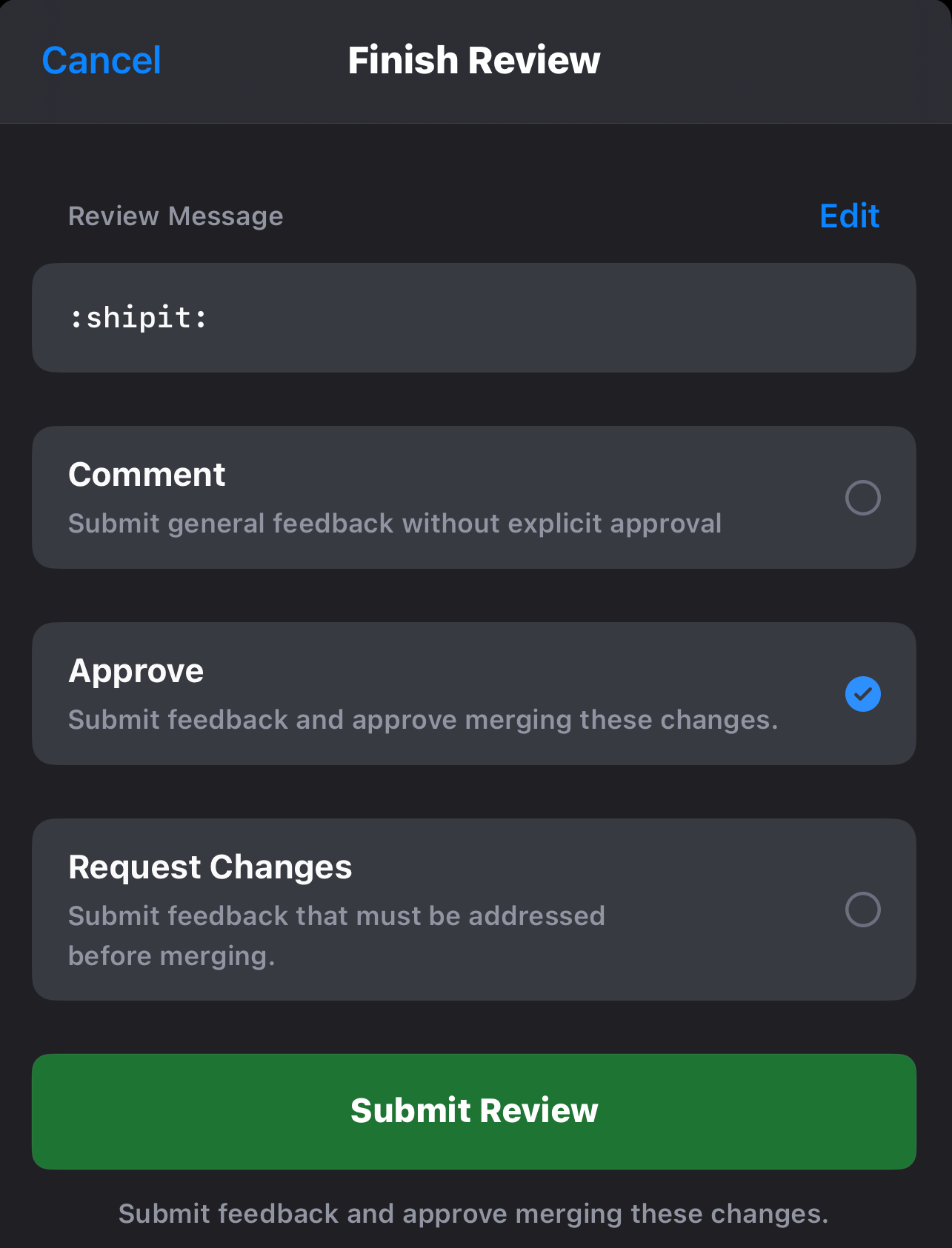

The ability to check the deployed preview gave me confidence in the changes. A few taps to approve and merge, and my site was updated. From initial idea to production deployment, everything happened on my iPhone.

The Bigger Picture

Although this is a trivial example, it says something important about where mobile development is heading. While I used iOS for this experiment, the core concept works just as well on Android: modern phones have the power and tooling to be legitimate development platforms. For the right project with the right verification setup, any smartphone paired with AI assistance can become a real development environment.

Ideas often strike me when I'm out and about, and now I can return to my desk with more than just notes. I can have actual, deployed foundations for those ideas already in place. The combination of mobile computing (regardless of platform) and AI agentic tooling isn't just a gimmick. It's a glimpse into a future where the boundaries between "real" development environments and mobile devices continue to blur.

This experiment led me to explore other mobile-first development tools. Omnara's AI Command Center particularly caught my attention. It's built specifically for managing AI agents from your phone. While GitHub Copilot helped me ship code changes, Omnara showed me what managing entire AI workflows on the go could look like. The ability to monitor agent performance and get instant notifications when an agent needs input feels like a natural evolution of what I was trying to achieve with my setup.

The mobile platform isn't just for checking Slack or firing off commits anymore. It's becoming a viable hub for orchestrating full development cycles. The next time inspiration hits on a bus ride or in a coffee shop, I won't just take notes. I'll ship.

(Written entirely on my iPhone with that same tripod setup. The proof is in the shipping.)