Today I learned how to run the GPT-OSS-20B model locally on my Mac using Ollama and set it as the default model for Agent mode in VS Code.

The Setup

- Install Ollama on your Mac

- Pull the model:

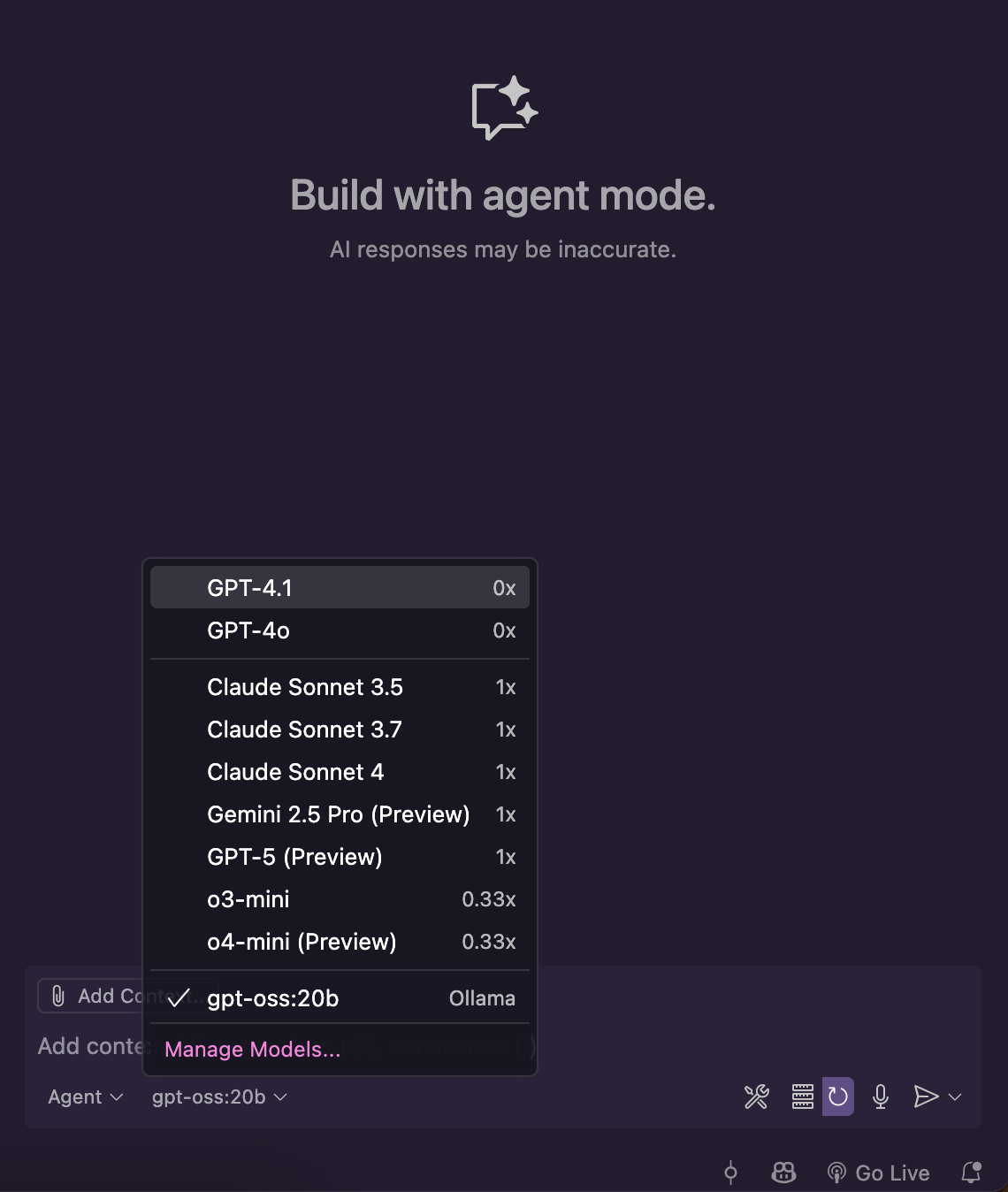

`ollama pull gpt-oss:20b`

- Open VS Code, navigate to the Agent mode sidebar, and click the model name at the bottom.

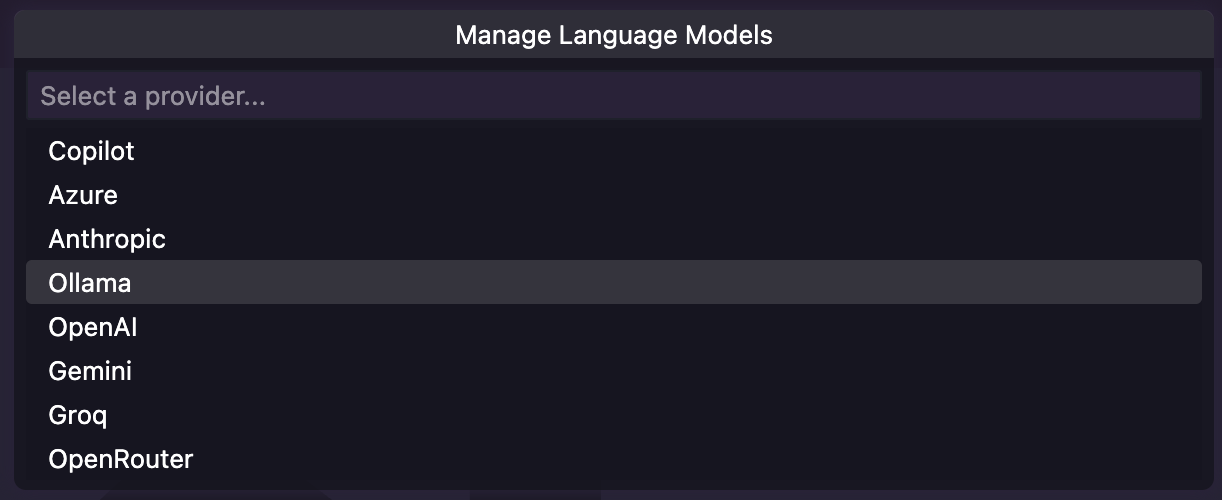

- Under Manage Language Models, select "Ollama"

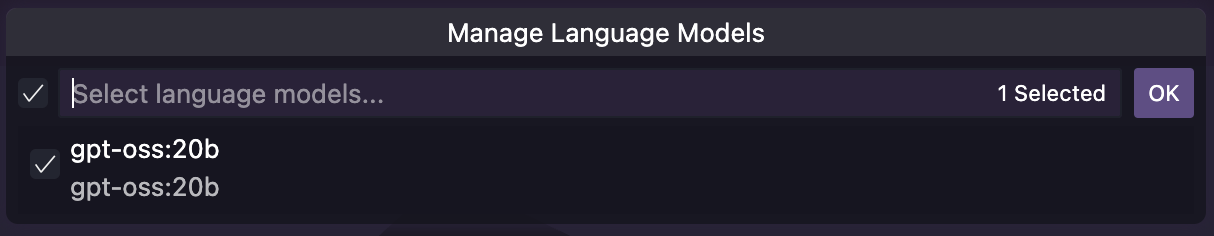

- Select "gpt-oss:20b" from the dropdown.
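Before or after switching VS Code over, it can help to confirm that Ollama is actually serving the model. The sketch below uses only the Python standard library to send one prompt to Ollama's local REST API (`/api/chat`). It assumes Ollama is listening on its default address, `http://localhost:11434`, and that the model was pulled under the `gpt-oss:20b` tag; the helper names `build_chat_request`, `extract_reply`, and `ask_local_model` are hypothetical, made up for this example.

```python
import json
import urllib.request

# Assumption: Ollama is running on its default local address.
OLLAMA_CHAT_URL = "http://localhost:11434/api/chat"
# Assumption: the model was pulled as "gpt-oss:20b".
MODEL = "gpt-oss:20b"


def build_chat_request(prompt: str, model: str = MODEL) -> dict:
    """Build a non-streaming /api/chat payload containing one user message."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,  # ask for one JSON object instead of a stream of chunks
    }


def extract_reply(response: dict) -> str:
    """Return the assistant's text from a non-streaming /api/chat response."""
    return response["message"]["content"]


def ask_local_model(prompt: str, url: str = OLLAMA_CHAT_URL) -> str:
    """POST one prompt to the local Ollama server and return the reply text."""
    body = json.dumps(build_chat_request(prompt)).encode("utf-8")
    req = urllib.request.Request(
        url, data=body, headers={"Content-Type": "application/json"}
    )
    # Passing data= makes urllib send a POST request.
    with urllib.request.urlopen(req) as resp:
        return extract_reply(json.load(resp))
```

With Ollama running, `print(ask_local_model("Reply with one word: ready"))` should print the model's answer. A connection error most likely means the Ollama server isn't running. Setting `"stream": False` keeps the sketch simple at the cost of waiting for the full reply before anything is returned.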

Why This Matters

- Full Control: You own the entire setup end to end

- Privacy: All inference happens locally on your machine

- Cost-Free: No API costs or subscription fees

- Flexibility: Works offline, and you can switch between models as needed or as new ones are released

Trade-offs

The main trade-off is speed: it's noticeably slower than cloud-based alternatives. However, this should improve as:

- Local models become more optimized

- Hardware capabilities increase

- Model architectures evolve
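To put a number on the speed trade-off for your own hardware, Ollama's non-streaming responses include timing fields: `eval_count` (tokens generated) and `eval_duration` (time spent generating, in nanoseconds), along with `load_duration` and `total_duration`. The sketch below turns those into tokens per second. It again assumes the default `localhost:11434` address and the `gpt-oss:20b` tag; `tokens_per_second` and `measure_generation` are hypothetical helper names.

```python
import json
import urllib.request

# Assumptions: default Ollama address and the "gpt-oss:20b" tag.
OLLAMA_GENERATE_URL = "http://localhost:11434/api/generate"
MODEL = "gpt-oss:20b"

# Ollama reports durations in nanoseconds.
NS_PER_SECOND = 1_000_000_000


def tokens_per_second(stats: dict) -> float:
    """Generation speed computed from eval_count and eval_duration."""
    if stats["eval_duration"] == 0:
        return 0.0  # nothing was generated, so there is no meaningful rate
    # Multiply before dividing to keep integer inputs exact as long as possible.
    return stats["eval_count"] * NS_PER_SECOND / stats["eval_duration"]


def measure_generation(prompt: str, url: str = OLLAMA_GENERATE_URL,
                       model: str = MODEL) -> dict:
    """Run one non-streaming generation and summarize its timing in seconds."""
    body = json.dumps({"model": model, "prompt": prompt, "stream": False})
    req = urllib.request.Request(
        url, data=body.encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(req) as resp:
        stats = json.load(resp)
    return {
        "load_seconds": stats.get("load_duration", 0) / NS_PER_SECOND,
        "total_seconds": stats["total_duration"] / NS_PER_SECOND,
        "tokens_per_second": tokens_per_second(stats),
    }
```

Calling `measure_generation("Explain recursion in two sentences.")` twice is worthwhile: the first call may include the time to load the model into memory (reported as `load_duration`), so the second call gives a fairer picture of steady-state generation speed.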